Outcome Standard 4.4

- for Education Matters members

Effective governance and a commitment to continuous improvement supports the quality and integrity of VET delivery.

| An NVR registered training organisation undertakes systematic monitoring and evaluation of the organisation to support quality delivery and the continuous improvement of services. |

This Standard is about maintaining continuous oversight of all aspects of the RTO’s operations to identify where things could be done better, and then having a system to action the items identified as needing improvement.

Importantly, this is more than simply having a continuous improvement register. And more, even, than simply entering items into that register. It also requires you to do something with those entries and be able to demonstrate how this process is ongoing.

Getting the data to inform the required improvements is part of the system - so avenues for collecting feedback must also be a consideration here.

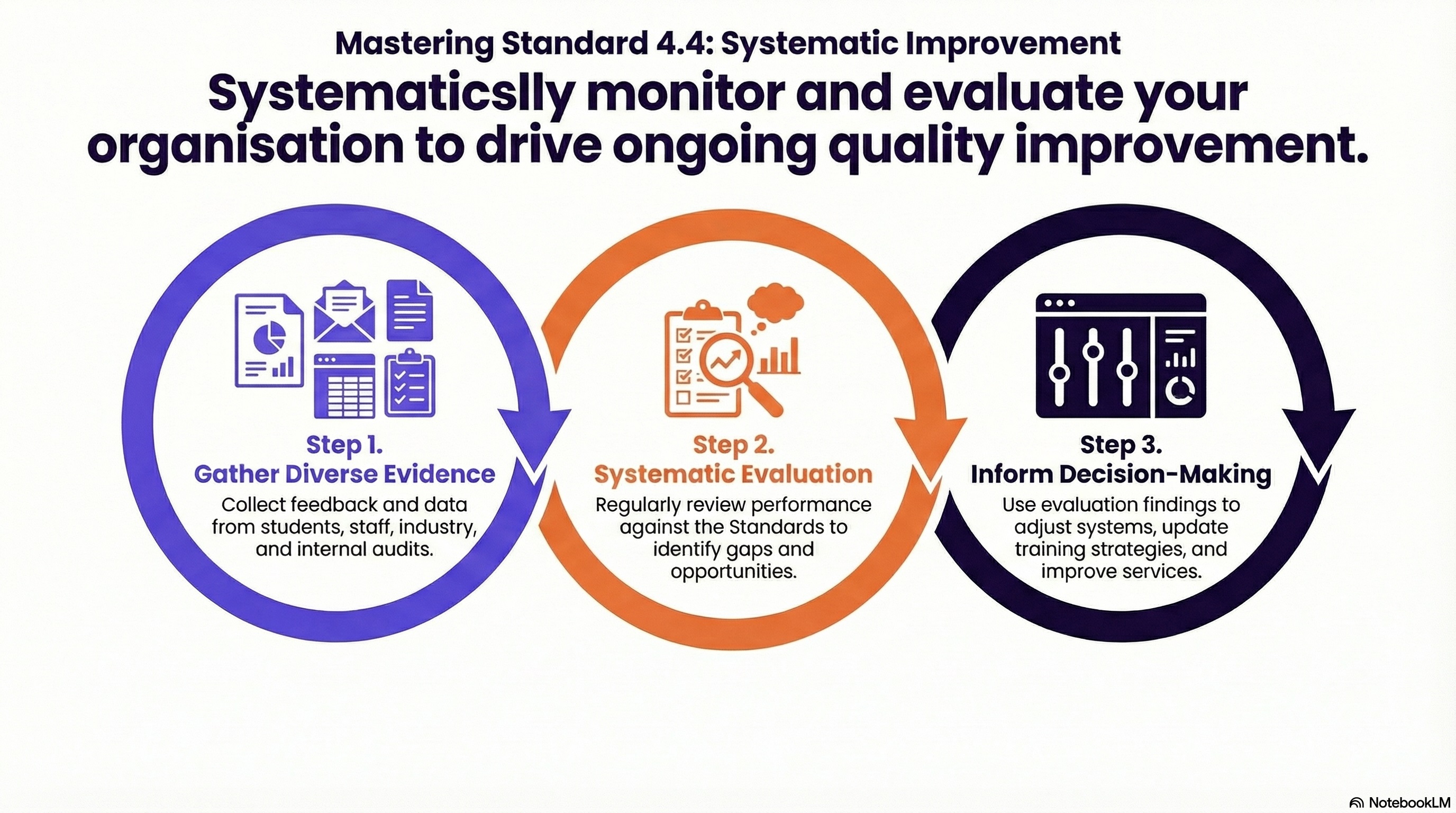

Systematic monitoring and evaluation is a structured approach that RTOs can use to continually test whether they are meeting the Standards and to identify adjustments needed to improve their services.

It involves moving away from the audit sprint (where everyone panics three months before re-registration) and moving toward a steady state of oversight. Practically, this entails:

Defining the what

Identifying which outcomes (e.g., student completion rates, employer satisfaction, or assessment validity) are critical to your RTO's mission

Determining the how

This is about choosing the specific methods and tools to collect data from stakeholders, such as students, staff, and employers

Creating (and sticking to!) a monitoring schedule

A documented calendar that ensures every Quality Area is reviewed at least once within a defined cycle (e.g., annually, quarterly or monthly)

Working with the data

Comparing different data sources (e.g., student feedback vs. trainer reflections vs. internal audit results) to identify common themes

Reviewing the data and feedback to measure the effectiveness of current systems and practices, which helps to identify concrete opportunities for improvement.

Closing the loop

The evidence gathered must be actively used to inform decision-making, adjust existing systems, and drive ongoing continuous improvement

Outcomes of these evaluations should be documented, reported, and communicated back to stakeholders and governing bodies, creating a feedback loop to ensure transparent and ongoing quality assurance
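To make the "Working with the data" step more concrete, here is a minimal Python sketch of triangulation. It assumes each data source has already been coded into short theme labels; the source names and themes are hypothetical. A theme is flagged as common only when at least two independent sources raise it. This is one possible approach, not a required method.

```python
from collections import defaultdict

def common_themes(sources: dict[str, list[str]], min_sources: int = 2) -> dict[str, list[str]]:
    """Return themes raised by at least `min_sources` different data sources.

    `sources` maps a source name (e.g. "student_survey") to the theme labels
    coded from that source. Repeats within one source count only once, so a
    theme only "triangulates" when several independent sources raise it.
    """
    seen_in: dict[str, set[str]] = defaultdict(set)
    for source, themes in sources.items():
        for theme in themes:
            # Normalise labels so "LMS navigation" and "lms navigation" match
            seen_in[theme.strip().lower()].add(source)
    return {
        theme: sorted(found_in)
        for theme, found_in in sorted(seen_in.items())
        if len(found_in) >= min_sources
    }

# Hypothetical coded feedback from three sources
feedback = {
    "student_survey": ["LMS navigation", "assessment timing", "LMS navigation"],
    "trainer_reflections": ["assessment timing", "resource currency"],
    "internal_audit": ["resource currency", "assessment timing"],
}
print(common_themes(feedback))
# {'assessment timing': ['internal_audit', 'student_survey', 'trainer_reflections'], 'resource currency': ['internal_audit', 'trainer_reflections']}
```

In this sketch, "LMS navigation" is not reported because only one source raised it, even though it was raised twice. That is the point of triangulation: it shows agreement across sources, not volume within one source.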

This approach supports quality by creating an environment where there are no surprises.

When an RTO monitors systematically, it can identify compliance drift (the natural tendency for processes to become lax over time) before that drift affects students.

Systematic monitoring and evaluation ensures that if a resource is outdated or a trainer’s industry currency is slipping, the system catches it and triggers a fix. This is a proactive stance and works to protect the RTO's reputation. Importantly, it also ensures the student receives the high-calibre education they were promised.

Standard 4.4 focuses entirely on continuous improvement. At its core, the Standard states that an RTO undertakes systematic monitoring and evaluation to support the delivery of quality services and continuous improvement.

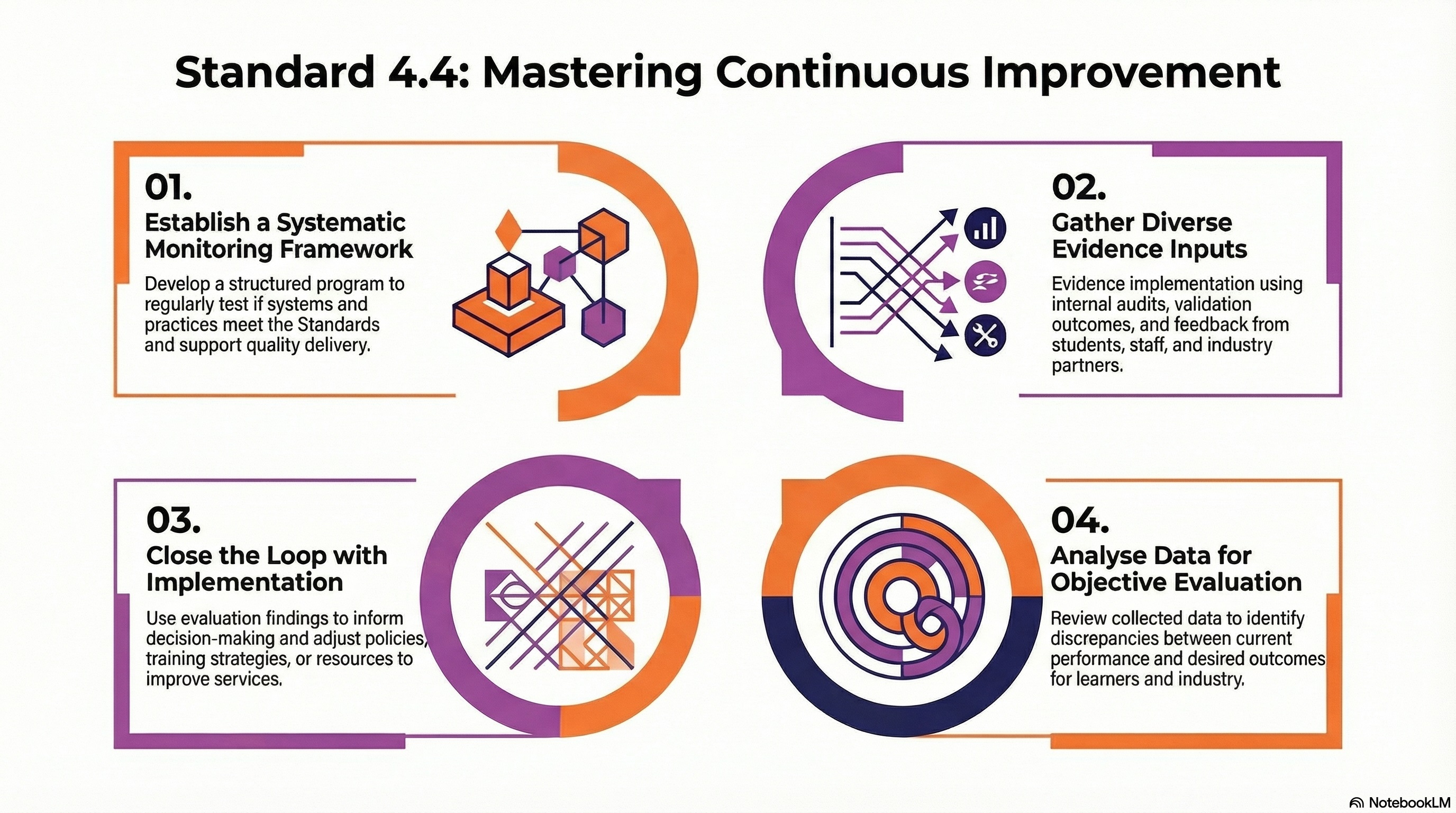

To achieve compliance, RTOs are expected to continually "self-assure". This means they must actively test whether they are meeting the Standards and identify any necessary adjustments to their systems, processes, or practices. Specifically, an RTO must demonstrate that it meets three key performance indicators:

A system for monitoring and evaluating its performance against the regulatory Standards.

Mechanisms for collecting and analysing data and feedback from relevant stakeholders, including students, staff, industry, employers, and regulators.

Evidence of how the outcomes of these evaluations are actively used to inform decision-making, adjust systems, and drive continuous improvement.

Outcome Standard 4.4 prioritises continuous improvement over simple quality assurance, shifting the RTO's focus from merely reacting to audits or complaints to taking a proactive, evidence-based approach to quality delivery.

By systematically monitoring and evaluating services, Standard 4.4 drives significant compliance and quality outcomes across the VET sector:

For students

It directly enhances learner outcomes by streamlining practices to reduce stress, applying modern and engaging teaching approaches, and adapting support services based on data-driven feedback.

For the VET workforce

It cultivates a positive workplace culture that promotes critical reflection, empowers staff to collaboratively solve problems, and builds robust, adaptable governance frameworks.

For employers and industry

It ensures that RTO training and assessment strategies remain faithfully aligned with current industry practices, fostering ongoing dialogue and increasing employer confidence in the education system.

For RTO operations

It builds operational efficiency, resilience, and adaptability, ensuring the RTO operates to a high standard of ethical decision-making and remains a thriving "learning organisation".

Ultimately, Outcome Standard 4.4 ensures that continuous improvement becomes an integral part of an RTO's day-to-day activities rather than an isolated, disruptive, or compliance-only event.

Its aim is to enable RTOs to demonstrate:

Systematic monitoring

Consistent evaluation

Evidence of improvement

The ability to say “we’re a quality provider because we can prove it”

For providers, compliance with Standard 4.4 means demonstrating how they meet the following performance indicators:

This requires RTOs to look constantly for improvements in how they perform against all of the requirements set out in the Outcome Standards.

The key takeaway from this indicator is that RTOs are expected to show what they do to stay on top of their operations and keep being the best they can be.

The expectation is for a system to:

Monitor performance - keep an eye on things

Evaluate performance - determine what went well and what can be improved

RTOs should consider how they:

Define quality

Monitor it

Measure it

Improve upon what they are doing

The VET regulator will be looking for a system that is planned, regular, and documented.

Section 185 “of the Act” refers to section 185 of the NVR Act 2011. Under this section, the Federal Skills Minister can make standards for RTOs to follow, with the agreement of the Ministerial Council (which includes the State and Territory skills ministers).

This means the requirement is not limited to the Outcome Standards. If the Minister makes any other standards under section 185 of the NVR Act, those standards will also apply.

Self-Assurance Framework - a high-level policy document describing how the RTO monitors itself

Annual Internal Audit schedule mapping out when each Outcome Standard will be reviewed

Compliance Calendar to track recurring deadlines (e.g., AVETMISS reporting, Quality Indicator surveys, insurance renewals)
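An internal audit schedule can be checked for gaps automatically. The Python sketch below is a minimal illustration. It assumes the schedule can be exported as a list of (standard, planned review date) pairs. The standards and dates shown are hypothetical examples. The sketch reports any standard that has no review planned inside the chosen cycle.

```python
from datetime import date

def unscheduled_standards(schedule, standards, cycle_start, cycle_end):
    """Return standards with no review planned within [cycle_start, cycle_end].

    `schedule` is a list of (standard, planned_review_date) pairs taken from
    the internal audit schedule; `standards` is the full list that must be
    covered in the cycle.
    """
    covered = {
        standard
        for standard, review_date in schedule
        if cycle_start <= review_date <= cycle_end
    }
    # Preserve the order of `standards` so the gap report is easy to read
    return [s for s in standards if s not in covered]

# Hypothetical example: the four Quality Area 4 standards over a calendar-year cycle
standards = ["4.1", "4.2", "4.3", "4.4"]
schedule = [
    ("4.1", date(2026, 2, 16)),
    ("4.2", date(2026, 5, 11)),
    ("4.4", date(2026, 9, 7)),
    ("4.3", date(2027, 1, 18)),  # planned, but outside this cycle
]
print(unscheduled_standards(schedule, standards, date(2026, 1, 1), date(2026, 12, 31)))
# ['4.3']
```

The same check could be run against every Outcome Standard on the RTO's list whenever the schedule changes. This helps catch reviews that have slipped into the next cycle.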

Remember that under self-assurance, RTOs can decide how they will meet requirements in a way that suits their own context of operations. As a result, different RTOs may provide different forms of evidence.

Some ideas for this include:

A documented annual report on self-assurance presented to the Governing Body that summarises the year's monitoring activities, identified gaps, and the effectiveness of the fixes implemented

Internal audit reports that document findings from your own system reviews and show a clear trail from “observation” to “recommendation” to “actioned”

Evidence of the system the RTO has in place

Evidence of monthly or quarterly "spot checks" on high-risk areas like assessment judgements or marketing claims

This is about using the outputs from monitoring and evaluation (M&E) to make improvements. Closing the loop is one of the most common areas where RTOs fall short: they find the problem (monitoring) but never fix the root cause (improvement).

The expectation here is that RTOs will be able to show how they’ve used the feedback, data and other inputs to actually improve.

Continuous Improvement (CI) Register - importantly, make it a dynamic log that tracks the:

Issue

Action taken

Person accountable

Date of fix

And possibly, an evaluation of the fix

Action Plan Template, which can be used for larger systemic changes (e.g., moving to a new LMS)

"You Said, We Did" reports, which close the loop for stakeholders by summarising for students and staff how their feedback led to actual changes

A before-and-after trail: evidence of a process identified as "broken" in an internal audit, followed by a record showing the updated process and improved student outcomes

Evidence of “closing the loop”. Similar to the above, take one specific issue identified in a survey (e.g., "LMS navigation is confusing") and show the trail:

survey results → CI Register entry → staff workshop to redesign navigation → updated LMS → follow-up survey showing improved satisfaction

Completed Risk Treatment plan showing how a risk identified in Standard 4.3 was mitigated through a CI activity

Records showing that Governing Persons didn't just "note" the monitoring data but actively discussed the root causes and authorised the resources (time or money) to fix them
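The CI Register fields listed above map naturally onto a simple record. The Python sketch below shows one hypothetical way to model them; it is not a prescribed format. It rests on two assumptions. First, an item only counts as "closed" once an action, a fix date and an evaluation of the fix are all recorded. Second, each item has a target date, so overdue open items can be listed for the Governing Body. The `source` and `target_date` fields, and all sample entries, are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class CIEntry:
    """One row of a hypothetical Continuous Improvement Register."""
    issue: str
    source: str                 # where it was identified, e.g. "student survey"
    accountable_person: str
    target_date: date           # assumed field: when the fix is due
    action_taken: Optional[str] = None
    date_of_fix: Optional[date] = None
    evaluation: Optional[str] = None  # did the fix actually work?

    def is_closed(self) -> bool:
        # The loop is only closed when the fix is done AND evaluated
        return all([self.action_taken, self.date_of_fix, self.evaluation])

def overdue_open_items(register: list[CIEntry], today: date) -> list[CIEntry]:
    """Open items past their target date, oldest first, for the board agenda."""
    overdue = [e for e in register if not e.is_closed() and e.target_date < today]
    return sorted(overdue, key=lambda e: e.target_date)

# Hypothetical register entries
register = [
    CIEntry("LMS navigation is confusing", "student survey", "Quality Manager",
            date(2026, 3, 31), action_taken="Navigation redesigned after staff workshop",
            date_of_fix=date(2026, 3, 20),
            evaluation="Follow-up survey showed improved satisfaction"),
    CIEntry("Outdated learner resource", "trainer reflection", "Training Manager",
            date(2026, 2, 28), action_taken="Resource updated",
            date_of_fix=date(2026, 3, 2)),  # fixed, but not yet evaluated
    CIEntry("Marketing claim needs review", "spot check", "Compliance Manager",
            date(2026, 4, 30)),
]
for entry in overdue_open_items(register, today=date(2026, 4, 1)):
    print(entry.issue)
# Outdated learner resource
```

In this sketch, the second item stays open even though the fix is in place, because nobody has yet checked whether the fix worked. This reflects the "closing the loop" point above.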

Here, the key word is “lawful”. This means that in the process of gathering information and feedback as data for continuous improvement efforts, the RTO must not do anything in that process that contravenes the legal rights of students, staff, employers and/or other stakeholders.

Whilst this Standard does not mention any specific privacy legislation, RTOs must operate in accordance with the 2025 Standards for RTOs and all other applicable legislation.

This means the Privacy Act 1988 will apply to how personal information is collected and stored, as will other protocols for cyber security, data protection and access controls.

Further, RTOs must operate with integrity and maintain accountability, and their leaders must foster a culture of fairness and transparency. This means that any time data or feedback is collected, it should be accompanied by an explanation of:

- why the data is being sought
- what it will be used for
- how it will be stored, and for how long.

Lawful collection rests on transparency, consent, and purpose. Every data point your RTO gathers must be handled in strict compliance with the requirements below. This applies whether the data comes from a formal student survey, an employer interview, or digital "heatmaps" from your LMS:

The Privacy Act 1988, including the 2024/25 reforms. The Act already requires personal information to be collected only by lawful and fair means (APP 3), and a further proposed reform would require collection to be "fair and reasonable" in the circumstances

The NVR Act 2011 and the Data Provision Requirements 2020, which compel the collection of Total VET Activity data

The Spam Act 2003, by ensuring stakeholders can opt out of non-mandatory feedback requests

State funding contracts, some of which have higher data-security and reporting benchmarks than the national standard

Lawfulness extends to how you use the data after you have it. Analysing data "lawfully" means:

De-identification - stripping personal details (names, USIs) before sharing trends with the wider staff or industry, so that privacy is maintained during the "evaluation" phase of Standard 4.4

Using data only for the purpose for which it was collected. For example, ensuring that data collected for continuous improvement isn't diverted for marketing purposes without explicit, separate consent

If you use AI or automated systems to analyse student data (e.g., to predict which students are "at risk" of dropping out), you will need to disclose this in your Privacy Policy. This obligation under the amended privacy legislation comes into effect in December 2026.

Automated decision-making (ADM) is the process of making a decision by automated means, without human involvement. The decisions may be based on factual data, digitally created profiles, or inferred data. ADM may include “profiling”, but it does not have to.
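At its simplest, de-identification means dropping direct identifiers and sharing only aggregated results. The Python sketch below assumes survey responses are dictionaries with hypothetical `name`, `usi`, `course` and `rating` keys. It removes names and USIs, then suppresses any course with fewer responses than a chosen threshold, because very small groups can still point back to individuals. The threshold of 5 is an illustrative assumption. Removing names and USIs alone does not guarantee that data is de-identified.

```python
from collections import defaultdict
from statistics import fmean

# Assumed field names for direct identifiers in a survey export
DIRECT_IDENTIFIERS = {"name", "usi", "email"}

def strip_identifiers(response: dict) -> dict:
    """Return a copy of one response with direct identifiers removed."""
    return {k: v for k, v in response.items() if k not in DIRECT_IDENTIFIERS}

def course_trends(responses: list[dict], min_group_size: int = 5) -> dict[str, float]:
    """Average rating per course, suppressing courses with too few responses.

    Small groups are left out because a handful of responses can point back
    to individual students even without names or USIs.
    """
    ratings: dict[str, list[float]] = defaultdict(list)
    for response in map(strip_identifiers, responses):
        ratings[response["course"]].append(response["rating"])
    return {
        course: round(fmean(values), 2)
        for course, values in ratings.items()
        if len(values) >= min_group_size
    }

# Hypothetical responses with placeholder identifiers
responses = [
    {"name": f"Student {i}", "usi": f"USI-{i:04d}", "course": "Course A", "rating": r}
    for i, r in enumerate([4, 5, 3, 4, 4])
] + [
    {"name": "Student X", "usi": "USI-9998", "course": "Course B", "rating": 2},
    {"name": "Student Y", "usi": "USI-9999", "course": "Course B", "rating": 5},
]
print(strip_identifiers(responses[0]))  # {'course': 'Course A', 'rating': 4}
print(course_trends(responses))          # {'Course A': 4.0}
```

Course B is left out of the shared trends because it has only two responses. Staff would see the Course A average, but never the individual rows behind it.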

Privacy Policy - updated to include a section on Automated Decision-Making (ADM) and to anticipate the proposed "fair and reasonable" collection test. This test is a proposed amendment to Australia's Privacy Act 1988. It would require the collection, use, and disclosure of personal information to be fair and reasonable in the circumstances, regardless of whether consent is obtained

Data Collection Notice (Template) - a short "Standard Disclosure" placed in the footer of all surveys, explaining the purpose, storage, and "Right to Access"

Data retention and destruction schedule mapping out exactly how long different types of data are kept (e.g., keeping assessment evidence for 2 years post-completion as per Compliance Standards)

Stakeholder Consent Matrix - a simple tool to track who has consented to what (e.g., "Yes to surveys, No to being featured in marketing clips"). Alternatively, manage consent as a feature within the LMS, with the ability to pull reports on who has said “yes” and who has said “no”

Audit trail of consent showing that every student and staff member signed a Privacy Notice that was current at the time of collection

Privacy Impact Assessment (PIA) documentation showing the RTO reviewed the privacy risks before implementing a new data collection tool (like a new survey platform or AI analysis bot)

Evidence of how de-identified data was presented at a management meeting to drive a change, proving the analysis was both lawful and effective.

Secure access logs as evidence that only authorised staff (e.g., the Quality Manager) have access to the raw, sensitive feedback data, while trainers only see the analysed/aggregated results
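A Stakeholder Consent Matrix is essentially a lookup table. The minimal Python sketch below uses hypothetical stakeholder IDs and purpose labels. It records consent for each purpose and treats anything not explicitly recorded as "no". It can also report who has said yes or no for a given purpose before a survey or marketing request goes out. In practice, this data would more likely live in the LMS or student management system than in a script.

```python
from collections import defaultdict

class ConsentMatrix:
    """Track which stakeholder has consented to which purpose (hypothetical)."""

    def __init__(self) -> None:
        # stakeholder_id -> {purpose: True (yes) / False (no)}
        self._consents: dict[str, dict[str, bool]] = defaultdict(dict)

    def record(self, stakeholder_id: str, purpose: str, consented: bool) -> None:
        """Record (or update) one stakeholder's decision for one purpose."""
        self._consents[stakeholder_id][purpose] = consented

    def has_consented(self, stakeholder_id: str, purpose: str) -> bool:
        # No record means no consent: never assume a "yes"
        return self._consents.get(stakeholder_id, {}).get(purpose, False)

    def report(self, purpose: str) -> dict[str, list[str]]:
        """Who has explicitly said yes and who has said no for one purpose."""
        result: dict[str, list[str]] = {"yes": [], "no": []}
        for stakeholder_id, purposes in sorted(self._consents.items()):
            if purpose in purposes:
                result["yes" if purposes[purpose] else "no"].append(stakeholder_id)
        return result

matrix = ConsentMatrix()
matrix.record("S001", "surveys", True)
matrix.record("S001", "marketing_clips", False)
matrix.record("S002", "surveys", True)
matrix.record("S002", "marketing_clips", True)
print(matrix.report("marketing_clips"))      # {'yes': ['S002'], 'no': ['S001']}
print(matrix.has_consented("S003", "surveys"))  # False
```

Defaulting to "no" when nothing is recorded is a deliberately cautious design choice. It supports the "explicit, separate consent" point made earlier for secondary uses such as marketing.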

A number of known risks to quality outcomes against Outcome Standard 4.4 have been identified.

Here are some ideas for how to mitigate those risks.

Risk: Not understanding legislative and regulatory obligations and requirements and how they apply to your operations.

Mitigation ideas:

Ensure the Compliance Manager is subscribed to ASQA, State Authorities, and Skills Education news updates

Engage an external expert on a regular basis (e.g., once every 2 years) to "audit the auditors". This helps ensure internal standards haven't slipped and that understanding of the requirements hasn't become siloed

Establish a "Regulatory Change Log" where all updates from ASQA, state authorities, or legislative bodies are recorded, analysed for impact on your specific RTO, and assigned to a staff member for action

Implement a "Standard of the Month" internal briefing where staff use the Education Matters Standards Hub to deep-dive into one Outcome Standard to ensure common understanding

Risk: Failing to have systematised approaches to self-assurance and monitoring, or only implementing improvements when notified of an upcoming regulatory activity.

Mitigation ideas:

Schedule themed mini-audits every month rather than one massive audit once a year. This normalises self-reflection as routine work, not a disruption. Themes could follow departments within the business, a different Standard each time, or stages of the student journey
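Rotating themes across the year is a simple round-robin schedule. The minimal Python sketch below assumes the themes are stages of the student journey; the theme names are hypothetical examples. It assigns one theme to each month and cycles back to the first theme once every theme has been covered.

```python
from itertools import cycle

MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]

def mini_audit_calendar(themes: list[str]) -> dict[str, str]:
    """Assign one mini-audit theme to each month, round-robin."""
    if not themes:
        raise ValueError("at least one theme is required")
    # zip stops after 12 months, so cycle() never runs forever
    return dict(zip(MONTHS, cycle(themes)))

# Hypothetical themes following the student journey
themes = ["Marketing and enrolment", "Training and assessment",
          "Student support", "Completion and certification"]
calendar = mini_audit_calendar(themes)
print(calendar["Jan"], "|", calendar["May"])
# Marketing and enrolment | Marketing and enrolment
```

With four themes, each one is audited three times a year. If there are more than twelve themes, some will not fit into a single year, so they would need to be grouped or the cycle lengthened.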

Risk: Not documenting and/or actioning areas for improvement identified from self-assurance, monitoring and analysis.

Mitigation ideas:

Governing persons must review the "open items" on the CI Register at every monthly board meeting to ensure deadlines for improvements are being met

Ensure each item in the register is assigned to an accountable role for actioning the improvement

Link Continuous Improvement (CI) targets to performance reviews or professional development plans. When staff are recognised for "fixing the system," they are more likely to engage with the register

Risk: Failing to identify and implement continuous improvement opportunities across your entire scope of operations.

Mitigation ideas:

Ensure your monitoring schedule explicitly includes "niche" or "low-enrolment" qualifications on your scope, not just the "cash cows" (those courses with lots of enrolments)

Ensure aspects of operations beyond training and assessment are included

Risk: Not providing staff with the opportunity to contribute to issues identification, continuous improvement activities and potential solutions.

Mitigation ideas:

Create a culture of spotting and reporting ideas and opportunities so that staff feel comfortable and welcome to submit feedback and suggestions. As an example, our team of validators were awarded “Super Spotter” awards which were shared and celebrated within the team for the “best pick up” of existing issues in the training and assessment materials

Dedicate 10 minutes of every staff meeting to "What’s one thing we could do better?" and log every suggestion in the CI Register, regardless of whether it's actioned immediately

Risk: Relying on generic evaluation templates without contextualising the review to your organisation’s operations.

Mitigation ideas:

Writing your own evaluation templates

Ensuring any purchased materials are reviewed and contextualised so that they make sense according to how the RTO actually operates

Risk: Not using multiple data collection and feedback points from stakeholders.

Mitigation ideas:

Embed opportunities for students, staff and stakeholders to provide feedback on their entire experience with the RTO - and make the opportunities available via multiple means. E.g. via a webform, anonymous survey, paper-based questionnaire etc

Risk: Not ensuring that the collection and use of data (including feedback) complies with legislative and regulatory obligations.

Mitigation ideas:

Conduct a half-yearly review of where student data is stored and who has access. Ensure all digital survey tools are configured to comply with the Australian Privacy Principles (APPs)

Conduct regular training for staff on appropriate collection and handling of personal information and data

This section contains video-based snippets to better understand a concept

How the self-assurance model fits with ongoing RTO obligations

Suggested steps for ongoing improvements

Available to purchase

Template: Continuous Improvement Register

Template: Basic Monitoring & Evaluation Framework

Guide: Getting Useful Data - Creating surveys that work

Remember to check the Templates section in the Standards Hub for basic templates that may be of use

[Download] ASQA's Practice Guide that covers Standard 4.4. This offers suggestions but does not impose legal or compliance obligations: Quality Area 4 Practice Guide - Continuous Improvement.pdf

[Website] Legislation - National Vocational Education and Training Regulator (Outcome Standards for Registered Training Organisations) Instrument 2025 (the "Outcome Standards")

[Website] Legislation National Vocational Education and Training Regulator (Data Provision Requirements) Instrument 2020

[Website] Legislation Privacy Act 1988

[Website] Legislation Spam Act 2003

[Article link] How does 'self-assurance' fit in to the new requirements?

[Article link] How the DDEER Model can help self-assurance

[Download] Automated Decision-Making - Better Practice Guide, Commonwealth Ombudsman, March 2025.pdf

[Website] 7 process improvement methodologies to improve efficiency

[Website] 9 Continuous Improvement Methodologies & Tools

[Website] Continuous Improvement Models: A Practical Guide

[Website] The continuous improvement process: Key steps, methodologies & benefits

Your membership includes access to the following related modules in the Learning and Resources Hub:

This section contains PD from the Skills Education archives. The video has been chosen for its relevance to the topic

The importance of closing the loop on continuous improvement efforts - and examples from an auditor and RTO owner

Following are some of the Skills Education professional development options that support requirements from Outcome Standard 4.4.

Depending on your membership level, you may already have access.

When you click on an item, it will take you either straight to your dashboard (meaning your membership includes access) or to a payment page (meaning the item isn't part of your membership level).

Select from the PD Library category:

Standards Hub for Education Matters members (c) MAE Projects, 2025-2026

- Updated 23.3.26

Content is provided as professional development information and to assist VET practitioners and RTOs to understand requirements under the 2025 Standards for RTOs. Every effort is made to ensure accuracy, but this information is not a substitute for independent legal, regulatory compliance or professional advice.